Cluster analysis

Clustering is the classification of objects into different groups, or more precisely, the partitioning of a data set into subsets (clusters), so that the data in each subset (ideally) share some common trait - often proximity according to some defined distance measure. Data clustering is a common technique for statistical data analysis, which is used in many fields, including machine learning, data mining, pattern recognition, image analysis and bioinformatics.

Machine learning typically regards data clustering as a form of unsupervised learning.

Besides the term data clustering (or just clustering), there are a number of terms with similar meanings, including cluster analysis, automatic classification, numerical taxonomy, botryology and typological analysis.

Types of clustering

Data clustering algorithms can be hierarchical or partitional. Hierarchical algorithms find successive clusters using previously established clusters, whereas partitional algorithms determine all clusters at once. Hierarchical algorithms can be agglomerative ("bottom-up") or divisive ("top-down"). Agglomerative algorithms begin with each element as a separate cluster and merge them into successively larger clusters. Divisive algorithms begin with the whole set and proceed to divide it into successively smaller clusters.

Two-way clustering, co-clustering and bi-clustering are names for clusterings in which not only the objects but also the features of the objects are clustered; that is, if the data is represented in a data matrix, the rows and columns are clustered simultaneously.

Another important distinction is whether the clustering uses symmetric or asymmetric distances. A property of Euclidean space is that distances are symmetric (the distance from object A to B is the same as the distance from B to A). In other applications (e.g., sequence-alignment methods, see Prinzie & Van den Poel (2006)), this is not the case.

Distance measure

An important step in any clustering is to select a distance measure, which will determine how the similarity of two elements is calculated. This will influence the shape of the clusters, as some elements may be close to one another according to one distance and farther away according to another. For example, in a 2-dimensional space, the distance between the point (x=1, y=0) and the origin (x=0, y=0) is always 1 according to the usual norms, but the distance between the point (x=1, y=1) and the origin can be 2, $\sqrt{2}$ or 1 if you take respectively the 1-norm, 2-norm or infinity-norm distance.

Common distance functions:

- the Euclidean distance (also called distance as the crow flies or 2-norm distance). A review of cluster analysis in health psychology research found that the most common distance measure in published studies in that research area is the Euclidean distance or the squared Euclidean distance.

- the Manhattan distance (also called taxicab norm or 1-norm)

- the infinity-norm

- the Mahalanobis distance, which corrects for different scales and correlations in the variables
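The example with the points (1, 0) and (1, 1) can be checked with a minimal sketch of the three norm-based distances. The function names are illustrative choices, not taken from any particular library.

```python
import math

def manhattan(p, q):
    """1-norm (taxicab) distance: sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(p, q))

def euclidean(p, q):
    """2-norm distance: square root of the sum of squared differences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def chebyshev(p, q):
    """Infinity-norm distance: largest absolute coordinate difference."""
    return max(abs(a - b) for a, b in zip(p, q))

origin = (0.0, 0.0)
for point in [(1.0, 0.0), (1.0, 1.0)]:
    # All three agree for (1, 0) but differ for (1, 1).
    print(point, manhattan(point, origin), euclidean(point, origin),
          chebyshev(point, origin))
```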

Hierarchical clustering

Creating clusters

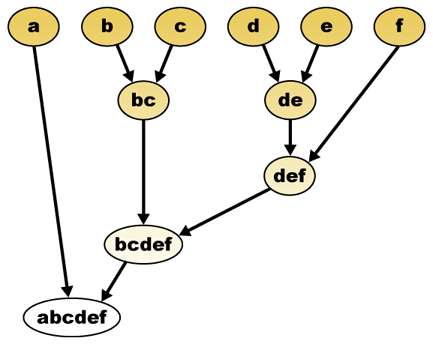

Hierarchical clustering builds (agglomerative), or breaks up (divisive), a hierarchy of clusters. The traditional representation of this hierarchy is a tree (called a dendrogram), with individual elements at one end and a single cluster containing every element at the other. Agglomerative algorithms begin at the end holding the individual elements, whereas divisive algorithms begin at the end holding the single all-inclusive cluster. (In the figure, the arrows indicate an agglomerative clustering.)

Cutting the tree at a given height will give a clustering at a selected precision. In the following example, cutting after the second row will yield clusters {a} {b c} {d e} {f}. Cutting after the third row will yield clusters {a} {b c} {d e f}, which is a coarser clustering, with fewer but larger clusters.

Agglomerative hierarchical clustering

For example, suppose this data is to be clustered, and the Euclidean distance is the distance metric.

The hierarchical clustering dendrogram would be as such:

This method builds the hierarchy from the individual elements by progressively merging clusters. In our example, we have six elements {a} {b} {c} {d} {e} and {f}. The first step is to determine which elements to merge in a cluster. Usually, we want to take the two closest elements, according to the chosen distance.

Optionally, one can also construct a distance matrix at this stage, where the number in the i-th row j-th column is the distance between the i-th and j-th elements. Then, as clustering progresses, rows and columns are merged as the clusters are merged and the distances updated. This is a common way to implement this type of clustering, and has the benefit of caching distances between clusters.

Suppose we have merged the two closest elements b and c. We now have the clusters {a}, {b, c}, {d}, {e} and {f}, and want to merge them further. To do that, we need to take the distance between {a} and {b c}, and therefore define the distance between two clusters. Usually the distance between two clusters $\mathcal{A}$ and $\mathcal{B}$ is one of the following:

- The maximum distance between elements of each cluster (also called complete linkage clustering): $\max \{\, d(x,y) : x \in \mathcal{A},\, y \in \mathcal{B} \,\}$

- The minimum distance between elements of each cluster (also called single linkage clustering): $\min \{\, d(x,y) : x \in \mathcal{A},\, y \in \mathcal{B} \,\}$

- The mean distance between elements of each cluster (also called average linkage clustering): $\frac{1}{|\mathcal{A}| \cdot |\mathcal{B}|} \sum_{x \in \mathcal{A}} \sum_{y \in \mathcal{B}} d(x,y)$

- The sum of all intra-cluster variance

- The increase in variance for the cluster being merged (Ward's criterion)

- The probability that candidate clusters spawn from the same distribution function (V-linkage)

Each agglomeration occurs at a greater distance between clusters than the previous agglomeration, and one can decide to stop clustering either when the clusters are too far apart to be merged (distance criterion) or when there is a sufficiently small number of clusters (number criterion).
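The merging procedure and the two stopping criteria can be sketched as follows. This is a naive illustration rather than an efficient implementation: it recomputes cluster distances on every pass instead of caching them in a distance matrix, and it covers only the complete, single and average linkages. The coordinates given to the six elements are hypothetical, chosen only so that b and c merge first.

```python
import math
from itertools import combinations

def euclidean(p, q):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

# Each linkage reduces the list of pairwise distances between two clusters.
LINKAGES = {
    "complete": max,
    "single": min,
    "average": lambda ds: sum(ds) / len(ds),
}

def agglomerate(points, linkage="single", max_distance=None, num_clusters=1):
    """Repeatedly merge the two closest clusters.

    points maps a label to a coordinate tuple. Merging stops when only
    num_clusters remain (number criterion) or when the closest pair is
    farther apart than max_distance (distance criterion).
    """
    combine = LINKAGES[linkage]
    clusters = [frozenset([label]) for label in points]
    merges = []  # (distance, merged cluster), in merge order
    while len(clusters) > num_clusters:
        best = None
        for a, b in combinations(clusters, 2):
            d = combine([euclidean(points[x], points[y]) for x in a for y in b])
            if best is None or d < best[0]:
                best = (d, a, b)
        d, a, b = best
        if max_distance is not None and d > max_distance:
            break
        clusters = [c for c in clusters if c not in (a, b)] + [a | b]
        merges.append((d, a | b))
    return clusters, merges

# Hypothetical coordinates for the six elements of the running example.
points = {"a": (0.0, 0.0), "b": (4.0, 0.0), "c": (4.5, 0.0),
          "d": (8.0, 0.0), "e": (8.7, 0.0), "f": (10.0, 0.0)}
clusters, merges = agglomerate(points, linkage="single", num_clusters=3)
print(sorted(sorted(c) for c in clusters))
```

Stopping at three clusters with these coordinates gives {a}, {b c} and {d e f}, the same grouping as cutting the tree after the third row.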

Concept clustering

Another variation of the agglomerative clustering approach is conceptual clustering.

Partitional clustering

k-means and derivatives

K-means clustering

The K-means algorithm assigns each point to the cluster whose center (also called centroid) is nearest. The center is the average of all the points in the cluster — that is, its coordinates are the arithmetic mean for each dimension separately over all the points in the cluster.

- Example: The data set has three dimensions and the cluster has two points: X = (x1, x2, x3) and Y = (y1, y2, y3). Then the centroid Z becomes Z = (z1, z2, z3), where z1 = (x1 + y1)/2 and z2 = (x2 + y2)/2 and z3 = (x3 + y3)/2.
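The same computation in code, as a small sketch; the numeric values of X and Y are made up for illustration.

```python
def centroid(points):
    """Arithmetic mean of the points, taken separately in each dimension."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

X = (1.0, 2.0, 3.0)
Y = (3.0, 6.0, 9.0)
Z = centroid([X, Y])  # (2.0, 4.0, 6.0)
```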

The algorithm steps are (J. MacQueen, 1967):

- Choose the number of clusters, k.

- Randomly generate k clusters and determine the cluster centers, or directly generate k random points as cluster centers.

- Assign each point to the nearest cluster center.

- Recompute the new cluster centers.

- Repeat the two previous steps until some convergence criterion is met (usually that the assignment hasn't changed).

The main advantages of this algorithm are its simplicity and speed, which allow it to run on large datasets. Its disadvantage is that it does not yield the same result with each run, since the resulting clusters depend on the initial random assignments. It minimizes intra-cluster variance (equivalently, it maximizes inter-cluster variance), but does not ensure that the result is a global minimum of variance.
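The steps listed above can be sketched in a few lines. This version assumes the second initialisation option (k data points drawn at random as the initial centers), squared Euclidean distance, and a `seed` parameter added here only to make runs repeatable; an emptied cluster simply keeps its previous center, which is one of several possible conventions.

```python
import random

def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def centroid(points):
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def k_means(points, k, seed=0, max_iter=100):
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # k random data points as initial centers
    assignment = None
    for _ in range(max_iter):
        # Assign each point to the nearest cluster center.
        new_assignment = [
            min(range(k), key=lambda j: squared_distance(p, centers[j]))
            for p in points
        ]
        if new_assignment == assignment:
            break  # converged: the assignment has not changed
        assignment = new_assignment
        # Recompute each center as the mean of its assigned points.
        for j in range(k):
            members = [p for p, a in zip(points, assignment) if a == j]
            if members:
                centers[j] = centroid(members)
    return centers, assignment

data = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
        (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
centers, assignment = k_means(data, k=2)
print(centers, assignment)
```

Changing `seed` changes the initial centers, which is the source of the run-to-run variation described above; on well-separated data such as this toy set the final partition is the same either way.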

QT clustering algorithm

QT (quality threshold) clustering (Heyer et al., 1999) is an alternative method of partitioning data, invented for gene clustering. It requires more computing power than k-means, but does not require specifying the number of clusters a priori, and always returns the same result when run several times.

The algorithm is:

- The user chooses a maximum diameter for clusters.

- Build a candidate cluster for each point by including the closest point, the next closest, and so on, until the diameter of the cluster surpasses the threshold.

- Save the candidate cluster with the most points as the first true cluster, and remove all points in the cluster from further consideration.

- Recurse with the reduced set of points.

The distance between a point and a group of points is computed using complete linkage, i.e. as the maximum distance from the point to any member of the group (see the "Agglomerative hierarchical clustering" section about distance between clusters).
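A minimal sketch of these steps follows, written as a loop rather than a recursion. It assumes Euclidean distance and represents clusters as lists of point indices. Because every candidate already fits within the threshold, checking the complete-linkage distance of the point about to be added is enough to keep the diameter bounded.

```python
import math

def euclidean(p, q):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def qt_cluster(points, max_diameter):
    """Return clusters as sorted lists of indices into points."""
    remaining = list(range(len(points)))
    clusters = []
    while remaining:
        best = []
        for seed in remaining:
            # Grow a candidate cluster around this seed point.
            candidate = [seed]
            others = [i for i in remaining if i != seed]
            while others:
                def linkage(i):
                    # Complete linkage: largest distance to any member.
                    return max(euclidean(points[i], points[j]) for j in candidate)
                nearest = min(others, key=linkage)
                if linkage(nearest) > max_diameter:
                    break  # adding any further point would exceed the threshold
                candidate.append(nearest)
                others.remove(nearest)
            if len(candidate) > len(best):
                best = candidate
        # Keep the largest candidate and remove its points from consideration.
        clusters.append(sorted(best))
        remaining = [i for i in remaining if i not in best]
    return clusters

data = [(0.0,), (1.0,), (2.0,), (10.0,), (11.0,), (30.0,)]
print(qt_cluster(data, max_diameter=3.0))
```

No random choice is involved, which is why repeated runs return the same clusters; the cost is that a candidate is rebuilt for every remaining point on every pass.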

Fuzzy c-means clustering

In fuzzy clustering, each point has a degree of belonging to clusters, as in fuzzy logic, rather than belonging completely to just one cluster. Thus, points on the edge of a cluster may be in the cluster to a lesser degree than points in the center of the cluster. For each point $x$ we have a coefficient $u_k(x)$ giving the degree of being in the $k$th cluster. Usually, the sum of those coefficients is defined to be 1:

$$\forall x \quad \sum_{k} u_k(x) = 1$$

With fuzzy c-means, the centroid of a cluster is the mean of all points, weighted by their degree of belonging to the cluster:

$$\mathrm{center}_k = \frac{\sum_x u_k(x)^m \, x}{\sum_x u_k(x)^m}$$

The degree of belonging is related to the inverse of the distance to the cluster center:

$$u_k(x) = \frac{1}{d(\mathrm{center}_k, x)},$$

then the coefficients are normalized and fuzzified with a real parameter $m > 1$ so that their sum is 1. So

$$u_k(x) = \frac{1}{\sum_j \left( \dfrac{d(\mathrm{center}_k, x)}{d(\mathrm{center}_j, x)} \right)^{2/(m-1)}}$$

For $m$ equal to 2, this is equivalent to normalising the inverse squared distances linearly so that they sum to 1. When $m$ is close to 1, the cluster center closest to the point is given much more weight than the others, and the algorithm is similar to k-means.

The fuzzy c-means algorithm is very similar to the k-means algorithm:

- Choose a number of clusters.

- Assign randomly to each point coefficients for being in the clusters.

- Repeat until the algorithm has converged (that is, the coefficients' change between two iterations is no more than $\varepsilon$, the given sensitivity threshold):

  - Compute the centroid for each cluster, using the formula above.

  - For each point, compute its coefficients of being in the clusters, using the formula above.

The algorithm minimizes intra-cluster variance as well, but has the same problems as k-means: the minimum is a local minimum, and the results depend on the initial choice of weights. The expectation-maximization algorithm is a more statistically formalized method that includes some of these ideas, notably partial membership in classes. It has better convergence properties and is generally preferred to fuzzy c-means.
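The fuzzy c-means loop can be sketched as below. The sketch assumes the standard update rules: centroids are means weighted by the coefficients raised to the power m, and coefficients are recomputed from distance ratios raised to 2/(m-1). The parameter names (`m`, `epsilon`, `seed`, `max_iter`) and the handling of a point that coincides with a center are choices made for this illustration.

```python
import random

def distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q)) ** 0.5

def fuzzy_c_means(points, c, m=2.0, epsilon=1e-6, seed=0, max_iter=300):
    rng = random.Random(seed)
    dims = len(points[0])
    power = 2.0 / (m - 1.0)
    # Random initial coefficients, normalised so each point's row sums to 1.
    u = []
    for _ in points:
        row = [rng.random() + 1e-9 for _ in range(c)]
        total = sum(row)
        u.append([w / total for w in row])
    for _ in range(max_iter):
        # Centroid of each cluster: mean of all points weighted by u^m.
        centers = []
        for k in range(c):
            weights = [row[k] ** m for row in u]
            total = sum(weights)
            centers.append(tuple(
                sum(w * p[d] for w, p in zip(weights, points)) / total
                for d in range(dims)))
        # New coefficients from the distance ratios to every center.
        new_u = []
        for p in points:
            ds = [distance(p, ctr) for ctr in centers]
            if min(ds) == 0.0:
                # Point sits exactly on a center: give it full membership there.
                hits = [1.0 if d == 0.0 else 0.0 for d in ds]
                new_u.append([h / sum(hits) for h in hits])
            else:
                new_u.append([
                    1.0 / sum((ds[k] / ds[j]) ** power for j in range(c))
                    for k in range(c)])
        change = max(abs(a - b)
                     for old, new in zip(u, new_u) for a, b in zip(old, new))
        u = new_u
        if change <= epsilon:
            break  # coefficients moved by no more than the threshold
    return centers, u

data = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
        (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
centers, u = fuzzy_c_means(data, c=2)
for point, row in zip(data, u):
    print(point, [round(w, 3) for w in row])
```

Taking the cluster with the largest coefficient for each point turns the fuzzy result into a hard partition, which is a convenient way to compare it with k-means.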

Graph-theoretic methods

Formal concept analysis is a technique for generating clusters of objects and attributes, given a bipartite graph representing the relations between the objects and attributes. Other methods for generating overlapping clusters (a cover rather than a partition) are discussed by Jardine and Sibson (1968) and Cole and Wishart (1970).

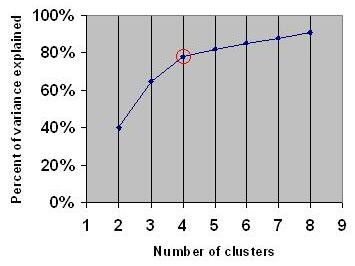

Elbow criterion

The elbow criterion is a common rule of thumb to determine what number of clusters should be chosen, for example for k-means and agglomerative hierarchical clustering. It should also be noted that the initial assignment of cluster seeds has bearing on the final model performance. Thus, it is appropriate to re-run the cluster analysis multiple times.

The elbow criterion says that you should choose a number of clusters so that adding another cluster doesn't add sufficient information. More precisely, if you graph the percentage of variance explained by the clusters against the number of clusters, the first clusters will add much information (explain a lot of variance), but at some point the marginal gain will drop, giving an angle in the graph (the elbow). This elbow cannot always be unambiguously identified.

On the following graph, the elbow is indicated by the red circle. The number of clusters chosen should therefore be 4. Percent of variance explained (or percent of total variance) is the ratio of between-group variance to total variance. With XLStat 7.5 (http://www.xlstat.com/en/home), an Excel add-in, you can find the within-group, between-group and total variance for each clustering analysis by selecting the "variance" item in the k-means or hierarchical clustering dialog box. It seems this item reports the variance including the effects of the clustering variables.
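The quantity plotted for the elbow criterion can be computed from any clustering, as in this sketch. It takes the fraction of variance explained to be the between-group sum of squares over the total sum of squares, computed as one minus the within-group share. The four labelings of the toy data are hand-written stand-ins for the output of a clustering algorithm at k = 1 to 4.

```python
def mean(points):
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def sum_of_squares(points, center):
    return sum(sum((a - b) ** 2 for a, b in zip(p, center)) for p in points)

def variance_explained(points, labels):
    """Between-group sum of squares divided by total sum of squares."""
    total = sum_of_squares(points, mean(points))
    within = 0.0
    for label in set(labels):
        members = [p for p, l in zip(points, labels) if l == label]
        within += sum_of_squares(members, mean(members))
    return 1.0 - within / total

data = [(0.0,), (1.0,), (10.0,), (11.0,)]
# Hypothetical clusterings of the same data with 1, 2, 3 and 4 clusters.
labelings = {1: [0, 0, 0, 0], 2: [0, 0, 1, 1], 3: [0, 0, 1, 2], 4: [0, 1, 2, 3]}
for k, labels in labelings.items():
    print(k, round(variance_explained(data, labels), 4))
```

On this toy data nearly all of the variance is explained at k = 2 and further clusters add very little, so the elbow falls at two clusters.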

Spectral clustering

Given a set of data points $A$, the similarity matrix may be defined as a matrix $S$ where $S_{ij}$ represents a measure of the similarity between points $i, j \in A$. Spectral clustering techniques make use of the spectrum of the similarity matrix of the data to cluster the points. Sometimes such techniques are also used to perform dimensionality reduction for clustering in fewer dimensions.

One such technique is the Shi-Malik algorithm, commonly used for image segmentation. It partitions points into two sets $(B_1, B_2)$ based on the eigenvector $v$ corresponding to the second-smallest eigenvalue of the Laplacian matrix

$$L = I - D^{-1/2} S D^{-1/2}$$

of $S$, where $D$ is the diagonal matrix

$$D_{ii} = \sum_j S_{ij}.$$

This partitioning may be done in various ways, such as by taking the median $m$ of the components in $v$, and placing all points whose component in $v$ is greater than $m$ in $B_1$, and the rest in $B_2$. The algorithm can be used for hierarchical clustering, by repeatedly partitioning the subsets in this fashion.

A related algorithm is the Meila-Shi algorithm, which takes the eigenvectors corresponding to the $k$ largest eigenvalues of the matrix $P = S D^{-1}$ for some $k$, and then invokes another algorithm (e.g. k-means) to cluster points by their respective $k$ components in these eigenvectors.
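The Shi-Malik bipartition with a median split can be sketched without a linear-algebra library. The sketch assumes the symmetric normalised Laplacian L = I - D^(-1/2) S D^(-1/2), a symmetric similarity matrix with positive row sums, and a gap between the second and third smallest eigenvalues. It finds the required eigenvector by power iteration on 2I - L, after removing the known eigenvector D^(1/2)·1 that belongs to eigenvalue 0. The similarity matrix is a made-up example, and a real implementation would normally call an eigensolver instead.

```python
import math
from statistics import median

def shi_malik_bipartition(S, iterations=500):
    """Split the point indices 0..n-1 into two lists using similarity matrix S."""
    n = len(S)
    degree = [sum(row) for row in S]  # D_ii = sum_j S_ij
    inv_sqrt = [1.0 / math.sqrt(d) for d in degree]
    # N = D^(-1/2) S D^(-1/2), so that L = I - N.
    N = [[inv_sqrt[i] * S[i][j] * inv_sqrt[j] for j in range(n)] for i in range(n)]
    # Eigenvector of L for eigenvalue 0, known in closed form.
    v0 = [math.sqrt(d) for d in degree]
    norm0 = math.sqrt(sum(x * x for x in v0))
    v0 = [x / norm0 for x in v0]
    # Power iteration on I + N = 2I - L with v0 projected out converges to
    # the eigenvector of L for its second-smallest eigenvalue.
    v = [math.sin(i + 1.0) for i in range(n)]  # arbitrary deterministic start
    for _ in range(iterations):
        proj = sum(a * b for a, b in zip(v, v0))
        v = [a - proj * b for a, b in zip(v, v0)]
        w = [v[i] + sum(N[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    m = median(v)
    b1 = [i for i in range(n) if v[i] > m]
    b2 = [i for i in range(n) if v[i] <= m]
    return b1, b2

# Hypothetical similarities: two groups of three points, similar within a
# group (1.0) and dissimilar across groups (0.1).
S = [[1.0 if (i < 3) == (j < 3) else 0.1 for j in range(6)] for i in range(6)]
print(shi_malik_bipartition(S))
```

Which group ends up as the first list depends on the sign of the eigenvector, so only the partition itself is meaningful.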

Applications

Biology

In biology, clustering has many applications:

- In the fields of plant and animal ecology, clustering is used to describe and to make spatial and temporal comparisons of communities (assemblages) of organisms in heterogeneous environments; it is also used in plant systematics to generate artificial phylogenies or clusters of organisms (individuals) at the species, genus or higher level that share a number of attributes

- In computational biology and bioinformatics:

- In transcriptomics, clustering is used to build groups of genes with related expression patterns. Often such groups contain functionally related proteins, such as enzymes for a specific pathway, or genes that are co-regulated. High throughput experiments using expressed sequence tags (ESTs) or DNA microarrays can be a powerful tool for genome annotation, a general aspect of genomics.

- In sequence analysis, clustering is used to group homologous sequences into gene families. This is a very important concept in bioinformatics, and evolutionary biology in general. See evolution by gene duplication.

Market research

Cluster analysis is widely used in market research when working with multivariate data from surveys and test panels. Market researchers use cluster analysis to partition the general population of consumers into market segments and to better understand the relationships between different groups of consumers/potential customers.

- Segmenting the market and determining target markets

- Product positioning

- New product development

- Selecting test markets (see experimental techniques)

Other applications

Social network analysis: In the study of social networks, clustering may be used to recognize communities within large groups of people.

Image segmentation: Clustering can be used to divide a digital image into distinct regions for border detection or object recognition.

Data mining: Many data mining applications involve partitioning data items into related subsets; the marketing applications discussed above represent some examples. Another common application is the division of documents, such as World Wide Web pages, into genres.

Slippy map optimization: Flickr's map of photos and other map sites use clustering to reduce the number of markers on a map. This both makes the map faster and reduces visual clutter.

Comparisons between data clusterings

There have been several suggestions for a measure of similarity between two clusterings. Such a measure can be used to compare how well different data clustering algorithms perform on a set of data. Many of these measures are derived from the matching matrix (aka confusion matrix), e.g., the Rand measure and the Fowlkes-Mallows Bk measures.
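As an illustration, the Rand measure can be computed by counting pairs of points directly, which gives the same value as deriving it from the matching matrix. This sketch is the plain (unadjusted) Rand index; the function name and the label-list representation are choices made here.

```python
from itertools import combinations

def rand_index(labels_a, labels_b):
    """Fraction of point pairs on which two clusterings agree.

    A pair counts as an agreement when both clusterings put the two points
    in the same cluster, or both put them in different clusters.
    """
    agree = 0
    total = 0
    for i, j in combinations(range(len(labels_a)), 2):
        same_a = labels_a[i] == labels_a[j]
        same_b = labels_b[i] == labels_b[j]
        agree += same_a == same_b
        total += 1
    return agree / total

print(rand_index([0, 0, 1, 1], [1, 1, 0, 0]))  # same partition, labels renamed
print(rand_index([0, 0, 1, 1], [0, 1, 0, 1]))
```

The measure depends only on which points are grouped together, so renaming the cluster labels does not change it.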

Algorithms

In recent years considerable effort has been put into improving algorithm performance (Z. Huang, 1998). Among the most popular are CLARANS (Ng and Han, 1994), DBSCAN (Ester et al., 1996) and BIRCH (Zhang et al., 1996).

See also

- k-means

- Artificial neural network (ANN)

- Self-organizing map

- Expectation Maximization (EM)

- Multidimensional scaling

Bibliography

- Abdi, H. (1990). Additive-tree representations (with an application to face processing). Lecture Notes in Biomathematics, 84, 43-59.

- Clatworthy, J., Buick, D., Hankins, M., Weinman, J., & Horne, R. (2005). The use and reporting of cluster analysis in health psychology: A review. British Journal of Health Psychology 10: 329-358.

- Cole, A. J. & Wishart, D. (1970). An improved algorithm for the Jardine-Sibson method of generating overlapping clusters. The Computer Journal 13(2):156-163.

- Ester, M., Kriegel, H.P., Sander, J., and Xu, X. 1996. A density-based algorithm for discovering clusters in large spatial databases with noise. Proceedings of the 2nd International Conference on Knowledge Discovery and Data Mining, Portland, Oregon, USA: AAAI Press, pp. 226–231.

- Heyer, L.J., Kruglyak, S. and Yooseph, S. (1999). Exploring Expression Data: Identification and Analysis of Coexpressed Genes. Genome Research 9:1106-1115.

- Huang, Z. (1998). Extensions to the K-means Algorithm for Clustering Large Datasets with Categorical Values. Data Mining and Knowledge Discovery, 2, p. 283-304.

- Jardine, N. & Sibson, R. (1968). The construction of hierarchic and non-hierarchic classifications. The Computer Journal 11:177.

- The on-line textbook: Information Theory, Inference, and Learning Algorithms, by David J.C. MacKay includes chapters on k-means clustering, soft k-means clustering, and derivations including the E-M algorithm and the variational view of the E-M algorithm.

- MacQueen, J. B. (1967). Some Methods for classification and Analysis of Multivariate Observations, Proceedings of 5-th Berkeley Symposium on Mathematical Statistics and Probability, Berkeley, University of California Press, 1:281-297

- Ng, R.T. and Han, J. 1994. Efficient and effective clustering methods for spatial data mining. Proceedings of the 20th VLDB Conference, Santiago, Chile, pp. 144–155.

- Prinzie A., D. Van den Poel (2006), Incorporating sequential information into traditional classification models by using an element/position-sensitive SAM. Decision Support Systems 42 (2): 508-526.

- Romesburg, H. Charles, Cluster Analysis for Researchers, 2004, 340 pp. ISBN 1-4116-0617-5. Reprint of the 1990 edition published by Krieger Pub. Co. A Japanese language translation is available from Uchida Rokakuho Publishing Co., Ltd., Tokyo, Japan.

- Zhang, T., Ramakrishnan, R., and Livny, M. 1996. BIRCH: An efficient data clustering method for very large databases. Proceedings of ACM SIGMOD Conference, Montreal, Canada, pp. 103–114.

For spectral clustering :

- Jianbo Shi and Jitendra Malik, "Normalized Cuts and Image Segmentation", IEEE Transactions on Pattern Analysis and Machine Intelligence, 22(8), 888-905, August 2000. Available on Jitendra Malik's homepage

- Marina Meila and Jianbo Shi, "Learning Segmentation with Random Walk", Neural Information Processing Systems, NIPS, 2001. Available from Jianbo Shi's homepage

For estimating number of clusters:

- Can, F., Ozkarahan, E. A. (1990) "Concepts and effectiveness of the cover coefficient-based clustering methodology for text databases." ACM Transactions on Database Systems. 15 (4) 483-517.

For discussion of the elbow criterion:

- Aldenderfer, M.S., Blashfield, R.K, Cluster Analysis, (1984), Newbury Park (CA): Sage.

External links

- P. Berkhin, Survey of Clustering Data Mining Techniques, Accrue Software, 2002.

- Jain, Murty and Flynn: Data Clustering: A Review, ACM Comp. Surv., 1999.

- for another presentation of hierarchical, k-means and fuzzy c-means see this introduction to clustering. Also has an explanation on mixture of Gaussians.

- David Dowe, Mixture Modelling page - other clustering and mixture model links.

- A tutorial on clustering

- An overview of non-parametric clustering and computer vision